I have spent the last several years deploying machine learning models in production — at Bridgestone integrating ONNX-based pipelines into manufacturing quality control, at Health Pilot fine-tuning LLMs for Medicare plan recommendation. In both cases, I learned the same lesson: abstractions are excellent until something breaks, and when something breaks in production, "the library is doing something wrong" is not a useful place to be.

I recently needed on-device speech transcription running inside a Flutter iOS app — no server, no round-trip. I started with sherpa-onnx, a Flutter library that wraps the full Whisper inference pipeline behind a single OfflineRecognizer API. It worked, until it didn't: transcription started producing partial results — a few words, then nothing. I had no way to debug inside the abstraction. I didn't know whether the error was in the mel spectrogram, the tokenizer, or somewhere in the decoding loop.

So I rebuilt the entire pipeline by hand. This post is the full story: exporting Whisper Base from Hugging Face, quantizing it to INT8, and implementing mel spectrogram extraction, tokenization, and the autoregressive decoding loop in Dart — including streaming partial tokens to the UI as they're generated.

My PhD is in geophysics. Signal processing — Fourier transforms, spectrograms, frequency domain analysis — is familiar ground. That background helped. But there were still several non-obvious traps in this pipeline, and I want to document every one.

Why Whisper Base, and Why INT8

Whisper Base has 74 million parameters. It's small enough to run on a mobile CPU with acceptable latency and large enough to transcribe natural conversational speech with reasonable accuracy. The Tiny model (39M) is faster but noticeably weaker on domain-specific or technical vocabulary. Base is the practical sweet spot for most mobile use cases that care about accuracy.

The FP32 model files from a standard Hugging Face export are too large for practical mobile deployment:

| File | FP32 size |
|---|---|
| encoder_model.onnx | 82 MB |
| decoder_model.onnx | 208 MB |
| decoder_with_past_model.onnx | 196 MB |

That's roughly 486 MB before tokenizer files. With INT8 dynamic quantization:

| File | INT8 size |
|---|---|
| encoder_model_int8.onnx | 26 MB |
| decoder_model_int8.onnx | 53 MB |
| decoder_with_past_model_int8.onnx | 49 MB |

128 MB total — about 26% of the original. A reasonable footprint for bundling in a mobile app.
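The totals quoted above can be re-derived in a few lines. This is only an arithmetic check; the sizes are copied from the two tables, not measured.

```python
# Sizes in MB, copied from the two tables above.
fp32_mb = {
    "encoder_model.onnx": 82,
    "decoder_model.onnx": 208,
    "decoder_with_past_model.onnx": 196,
}
int8_mb = {
    "encoder_model_int8.onnx": 26,
    "decoder_model_int8.onnx": 53,
    "decoder_with_past_model_int8.onnx": 49,
}

fp32_total = sum(fp32_mb.values())
int8_total = sum(int8_mb.values())
ratio = int8_total / fp32_total

print(f"FP32: {fp32_total} MB, INT8: {int8_total} MB, ratio: {ratio:.0%}")
# FP32: 486 MB, INT8: 128 MB, ratio: 26%
```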

Step 1: Export to FP32 ONNX

I use HuggingFace's optimum library to handle the ONNX export. The setup:

    # Quote the extras spec so zsh (the macOS default shell) does not glob the brackets
    pip install "optimum[onnxruntime]" onnxruntime

    python -m optimum.exporters.onnx \
      --model openai/whisper-base \
      --task automatic-speech-recognition-with-past \
      --opset 17 \
      outputs/whisper-base-fp32/

The --task automatic-speech-recognition-with-past flag is critical. It exports three ONNX files instead of one:

- encoder_model.onnx: processes the mel spectrogram into encoder hidden states. Runs once per transcription call.

- decoder_model.onnx: the first decoder step. Takes the initial prompt tokens (no KV cache) and returns logits plus the initial key-value cache.

- decoder_with_past_model.onnx: all subsequent decoder steps. Takes a single token plus the accumulated KV cache, returns updated logits and the new KV cache.

The split between decoder and decoder_with_past is the standard KV cache optimization. Without it, generating every new token would require reprocessing all previous tokens from scratch — O(n²) cost instead of O(n).
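To make the O(n²) versus O(n) claim concrete, here is a minimal counting sketch. It only counts how many token positions the decoder has to process in total; it ignores the per-position attention cost, and the function name and the 4-token prompt default are illustrative assumptions.

```python
def tokens_processed(n_generated: int, prompt_len: int = 4, use_cache: bool = True) -> int:
    """Count the token positions the decoder processes to emit n_generated tokens."""
    total = prompt_len  # the first step always sees the whole prompt
    for step in range(1, n_generated):
        if use_cache:
            total += 1  # only the newest token; earlier keys/values come from the cache
        else:
            total += prompt_len + step  # re-run the prompt plus every token generated so far
    return total


with_cache = tokens_processed(50, use_cache=True)      # 53
without_cache = tokens_processed(50, use_cache=False)  # 1425
print(with_cache, without_cache)
```

With the cache the count grows linearly with the number of generated tokens; without it, the count grows quadratically.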

Step 2: Dynamic INT8 Quantization

I use onnxruntime.quantization.quantize_dynamic. Weights are quantized to INT8 offline; activations are quantized at runtime. No calibration dataset needed, which makes iteration fast.

The encoder requires special handling:

    from onnxruntime.quantization import quantize_dynamic, QuantType

    # Encoder: restrict to MatMul/Gemm only
    quantize_dynamic(
        "outputs/whisper-base-fp32/encoder_model.onnx",
        "outputs/whisper-base-int8/encoder_model_int8.onnx",
        weight_type=QuantType.QInt8,
        op_types_to_quantize=["MatMul", "Gemm"],
    )

    # Decoders: all ops
    quantize_dynamic(
        "outputs/whisper-base-fp32/decoder_model.onnx",
        "outputs/whisper-base-int8/decoder_model_int8.onnx",
        weight_type=QuantType.QInt8,
    )

    quantize_dynamic(
        "outputs/whisper-base-fp32/decoder_with_past_model.onnx",
        "outputs/whisper-base-int8/decoder_with_past_model_int8.onnx",
        weight_type=QuantType.QInt8,
    )

The encoder restriction matters on iOS. Whisper's encoder uses two Conv1D layers at the front to process the mel spectrogram. Full dynamic quantization converts these to ConvInteger operations — an op not included in ONNX Runtime's mobile build for iOS. Restricting to MatMul and Gemm leaves those Conv1D layers in FP32, keeping iOS compatibility while still quantizing the larger attention weight matrices where the bulk of the compute happens.

The decoders contain no Conv layers (only attention and feed-forward blocks), so full quantization is safe.
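For intuition about what weight quantization does, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is an illustration only: ONNX Runtime's quantize_dynamic uses its own scheme (including zero points and runtime activation scaling), and the helper names below are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: float weights -> (int8 values, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp into the signed 8-bit range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in q]


weights = [0.50, -1.27, 0.003, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # [50, -127, 0, 90]
```

Each weight shrinks from 4 bytes to 1, which is why the quantized files land at roughly a quarter of their FP32 size. The rounding error per weight is bounded by half a quantization step (scale / 2).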

Step 3: The Mel Spectrogram — Where My Geophysics Background Helped (and Still Tripped Me)

This is where most of the complexity lives. Whisper expects a specific log-mel spectrogram as its encoder input:

- 16 kHz mono audio, padded or truncated to 30 seconds (480,000 samples)

- 400-point real FFT (n_fft = 400), giving 201 frequency bins

- 160-sample hop length (10 ms between frames; each 400-sample frame spans 25 ms)

- 80 mel filterbanks using Slaney area-normalized weighting

- 3000 time frames

- Log normalization: take log10, clamp to (max − 8.0), shift by +4, scale by ¼
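The numbers in this list all follow from four constants, and the normalization step is short enough to write out. A minimal pure-Python sketch follows; the 1e-10 floor before the logarithm is an assumption taken from the reference Whisper preprocessing rather than from this post, and the function name is illustrative.

```python
import math

SAMPLE_RATE = 16_000
CHUNK_SECONDS = 30
N_FFT = 400
HOP_LENGTH = 160

n_samples = SAMPLE_RATE * CHUNK_SECONDS    # 480000 samples
n_frames = n_samples // HOP_LENGTH         # 3000 time frames
n_bins = N_FFT // 2 + 1                    # 201 frequency bins
hop_ms = 1000 * HOP_LENGTH / SAMPLE_RATE   # 10.0 ms between frames
window_ms = 1000 * N_FFT / SAMPLE_RATE     # 25.0 ms per frame


def log_mel_normalize(mel_power):
    """mel_power: list of rows of non-negative mel energies."""
    # Assumed floor of 1e-10 avoids log10(0) on silent frames.
    log_spec = [[math.log10(max(v, 1e-10)) for v in row] for row in mel_power]
    peak = max(max(row) for row in log_spec)
    floor = peak - 8.0  # clamp everything to within 8 log10 units of the peak
    return [[(max(v, floor) + 4.0) / 4.0 for v in row] for row in log_spec]


print(log_mel_normalize([[1.0, 0.0]]))  # [[1.0, -1.0]]
```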

I've been computing spectrograms since my PhD work in geophysics — seismic signal analysis involves the same basic tools. So when the first transcription attempts produced garbage, I knew exactly where to look. The problem was not the signal processing logic. It was the FFT size.

Whisper uses n_fft = 400, not 512. This is the trap. Many FFT libraries assume or default to power-of-two lengths. If you use n_fft = 512 (or let a library choose it for you), you get 257 frequency bins spaced 31.25 Hz apart instead of 201 bins spaced 40 Hz apart, and the 80-mel filterbank that maps those frequencies to mel bins is completely misaligned. The model receives a spectrogram that looks plausible but is wrong, and the encoder produces hidden states that are effectively noise.
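The misalignment is easy to see by listing the bin frequencies for both FFT sizes. A small sketch (the function name is illustrative):

```python
SAMPLE_RATE = 16_000


def rfft_bin_frequencies(n_fft: int, sample_rate: int = SAMPLE_RATE):
    """Centre frequency in Hz of each real-FFT bin."""
    return [k * sample_rate / n_fft for k in range(n_fft // 2 + 1)]


freqs_400 = rfft_bin_frequencies(400)
freqs_512 = rfft_bin_frequencies(512)

print(len(freqs_400), freqs_400[1], freqs_400[25])  # 201 40.0 1000.0
print(len(freqs_512), freqs_512[1], freqs_512[25])  # 257 31.25 781.25
```

A filterbank row built for 201 bins expects column 25 to carry energy at 1000 Hz. Fed a 512-point spectrum, the same column carries energy at 781.25 Hz, and the shapes do not even match without truncation.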

Confirm the FFT size directly from the model's feature extractor configuration:

    from transformers import WhisperFeatureExtractor

    fe = WhisperFeatureExtractor.from_pretrained("openai/whisper-base")
    print(fe.n_fft)  # 400, not 512

Because 400 is not a power of two, a radix-2 FFT routine is not an option in Dart, so I precompute a direct DFT twiddle table in the worker isolate. The table is computed once per isolate lifetime and reused across all frames:

    static const int _nFft = 400;
    static const int _nBins = 201; // n_fft / 2 + 1

    Float64List _buildCosT() {
      final t = Float64List(_nFft * _nBins);
      for (var k = 0; k < _nBins; k++) {
        for (var n = 0; n < _nFft; n++) {
          t[k * _nFft + n] = math.cos(2 * math.pi * k * n / _nFft);
        }
      }
      return t;
    }
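For checking a Dart port, it helps to have the same direct DFT in a reference language. The sketch below is a Python equivalent under two assumptions not stated in the post: a matching sine table exists alongside the cosine table, and the window is a periodic Hann window. All names are illustrative.

```python
import math

N_FFT = 400
N_BINS = N_FFT // 2 + 1

# Precomputed once, mirroring the Dart cosine table plus an assumed sine table.
COS_T = [[math.cos(2 * math.pi * k * n / N_FFT) for n in range(N_FFT)] for k in range(N_BINS)]
SIN_T = [[math.sin(2 * math.pi * k * n / N_FFT) for n in range(N_FFT)] for k in range(N_BINS)]
# Periodic Hann window (assumption: divides by N_FFT, not N_FFT - 1).
HANN = [0.5 - 0.5 * math.cos(2 * math.pi * n / N_FFT) for n in range(N_FFT)]


def frame_power(frame):
    """Power spectrum |X[k]|^2 of one 400-sample frame, for k = 0..200."""
    windowed = [s * w for s, w in zip(frame, HANN)]
    power = []
    for k in range(N_BINS):
        re = sum(x * c for x, c in zip(windowed, COS_T[k]))
        im = -sum(x * s for x, s in zip(windowed, SIN_T[k]))
        power.append(re * re + im * im)
    return power


# A pure tone that sits exactly on bin 10 (10 * 40 Hz = 400 Hz at 16 kHz).
tone = [math.cos(2 * math.pi * 10 * n / N_FFT) for n in range(N_FFT)]
spectrum = frame_power(tone)
peak_bin = max(range(N_BINS), key=spectrum.__getitem__)
print(peak_bin)  # 10
```

Feeding the same synthetic tone through the Dart code and comparing the peak bin is a quick first check before comparing full spectrograms.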

One optimization that made a real difference: for a 5-second recording, only about 520 of the 3000 mel frames contain real audio. The remaining 2480 frames are zero-padded and their DFT output is trivially zero. Skipping those frames reduces Dart-side compute by about 83%:

    final realLen = audio.length.clamp(0, _nSamples);
    // math.min, to match the prefixed dart:math import used for math.cos above
    final lastRealFrame = math.min(_nFrames, (realLen / _hopLength).ceil() + 2);

    for (var f = 0; f < lastRealFrame; f++) {
      // Hann window + 400-point DFT for this frame
    }
    // Frames lastRealFrame..2999 are left at zero
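The same frame-count formula in Python, as a sketch for sanity-checking the savings. The constants mirror the Dart names above; the function name is illustrative.

```python
import math

SAMPLE_RATE = 16_000
HOP_LENGTH = 160
N_SAMPLES = 480_000
N_FRAMES = 3000


def last_real_frame(num_samples: int) -> int:
    """Index one past the last frame that can contain real audio."""
    real_len = max(0, min(num_samples, N_SAMPLES))
    return min(N_FRAMES, math.ceil(real_len / HOP_LENGTH) + 2)


frames = last_real_frame(5 * SAMPLE_RATE)
skipped = 1 - frames / N_FRAMES
print(frames, f"{skipped:.0%}")  # 502 83%
```

Exactly 5.0 seconds of audio gives 502 frames; a clip slightly longer than 5 seconds lands near the 520 quoted above, and the skipped share stays at about 83% either way.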

The mel filterbank itself I load from a pre-exported JSON file (mel_filters.json) rather than computing from the Slaney formula in Dart. The filterbank is the exact output of WhisperFeatureExtractor — shape [80, 201], float64. Computing it from scratch in Dart with exact numerical parity is possible but fragile; loading the pre-exported values eliminates floating-point divergence at the source.

    python -c "
    from transformers import WhisperFeatureExtractor
    import json
    fe = WhisperFeatureExtractor.from_pretrained('openai/whisper-base')
    json.dump(fe.mel_filters.tolist(), open('mel_filters.json', 'w'))
    "

This file goes into the app bundle alongside the ONNX models.

Step 4: The Tokenizer and the Token ID That Cost Me Hours

Whisper uses GPT-2 byte-level BPE tokenization. In Dart, I load the vocabulary JSON directly, map token IDs to their vocabulary strings, concatenate them, and decode through the GPT-2 byte-to-Unicode mapping.
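The decode path described above (IDs to vocabulary strings, concatenate, then reverse the byte-to-Unicode mapping) can be sketched in Python as a reference for the Dart port. The byte table follows the published GPT-2 scheme; the toy vocabulary and its IDs are made up for illustration.

```python
def bytes_to_unicode():
    """GPT-2's reversible map from the 256 byte values to printable characters."""
    # Bytes that are already printable map to themselves.
    keep = list(range(0x21, 0x7F)) + list(range(0xA1, 0xAD)) + list(range(0xAE, 0x100))
    mapping = {b: chr(b) for b in keep}
    extra = 0
    for b in range(256):
        if b not in mapping:
            mapping[b] = chr(256 + extra)  # remaining bytes get code points from 256 up
            extra += 1
    return mapping


BYTE_DECODER = {ch: b for b, ch in bytes_to_unicode().items()}


def decode_tokens(token_ids, id_to_token):
    """Join vocabulary strings, then map each character back to its original byte."""
    # IDs missing from the vocabulary (e.g. special tokens) are skipped.
    text = "".join(id_to_token[i] for i in token_ids if i in id_to_token)
    raw = bytes(BYTE_DECODER[ch] for ch in text)
    return raw.decode("utf-8", errors="replace")


# Hypothetical three-entry vocabulary; "\u0120" is how a leading space is stored.
toy_vocab = {0: "Hello", 1: "\u0120world", 2: "\u00c3\u00a9"}
print(decode_tokens([0, 1], toy_vocab))  # Hello world
```

Decoding with errors="replace" matters when streaming: a multi-byte UTF-8 character can be split across two tokens, so a partial sequence may end mid-character.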

The initial decoder prompt that seeds the autoregressive loop:

| Token | ID | Meaning |
|---|---|---|
| <\|startoftranscript\|> | 50258 | Always first |
| <\|en\|> | 50259 | English language |
| <\|transcribe\|> | 50359 | Transcription task |
| <\|notimestamps\|> | 50363 | Suppress timestamp tokens |
| <\|endoftext\|> | 50257 | Stop: generation ends here |

The critical one: <|transcribe|> is token 50359, not 50360. Token 50360 is <|startoflm|> — it puts the decoder into language model mode rather than transcription mode. In language model mode, the decoder generates a few tokens and stops early. This is the exact bug that was producing my partial transcriptions — first few words and then nothing. The model was not broken. I was sending it the wrong task token.

I confirmed this by checking added_tokens.json in the exported model directory, which is the authoritative source:

{"<|transcribe|>": 50359, "<|startoflm|>": 50360}

Never trust documentation for special token IDs. Always verify against the file in the model directory.
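One way to make that verification routine is a small script that compares the IDs hard-coded in the app against added_tokens.json. This is a sketch under assumptions: the function name is hypothetical, the expected IDs are the ones from the table above, and <|endoftext|> is left out because it may live in vocab.json rather than added_tokens.json.

```python
import json

# IDs the Dart code hard-codes, taken from the prompt table above.
EXPECTED = {
    "<|startoftranscript|>": 50258,
    "<|en|>": 50259,
    "<|transcribe|>": 50359,
    "<|notimestamps|>": 50363,
}


def check_special_tokens(added_tokens_path, expected=EXPECTED):
    """Return {token: (expected_id, actual_id)} for every mismatch; empty if all match."""
    with open(added_tokens_path, encoding="utf-8") as f:
        actual = json.load(f)
    return {
        tok: (want, actual.get(tok))
        for tok, want in expected.items()
        if actual.get(tok) != want
    }


# Usage (path is an example):
# mismatches = check_special_tokens("outputs/whisper-base-fp32/added_tokens.json")
# assert not mismatches, mismatches
```

Running a check like this in CI would turn a silent off-by-one in a token constant into an immediate failure.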

Step 5: The Autoregressive Decoding Loop in Dart

Once the encoder has processed the mel spectrogram into a [1, 1500, 512] hidden state tensor, the decoder runs a standard autoregressive greedy loop.

The first step uses decoder_model_int8.onnx — it takes the prompt tokens (no KV cache) and returns both the initial logits and the full set of key-value cache tensors. All subsequent steps use decoder_with_past_model_int8.onnx.

One thing I verified carefully from the model's output names: decoder_with_past only outputs decoder KV tensors (present.X.decoder.*). It does not re-output encoder cross-attention KVs. Those encoder KV tensors are computed once in the first decoder step and held constant for the entire generation:

    // After the first decoder step, split the KV cache by type.
    // Assumption: the export names cache outputs present.* and the matching
    // decoder_with_past inputs past_key_values.*, so keys are renamed here.
    final encKv = <String, OrtValue>{};
    final decKv = <String, OrtValue>{};

    for (final entry in dec0Outputs.entries) {
      if (!entry.key.startsWith('present.')) continue; // skip logits
      final inputName = entry.key.replaceFirst('present.', 'past_key_values.');
      if (entry.key.contains('.encoder.')) {
        encKv[inputName] = entry.value; // Static: same for every step
      } else {
        decKv[inputName] = entry.value; // Updated each step
      }
    }

    // Autoregressive loop:
    for (var step = 0; step < maxTokens; step++) {
      final feed = <String, OrtValue>{'input_ids': nextTokenTensor};
      feed.addAll(encKv); // same every step
      feed.addAll(decKv); // grows each step

      final out = await decWithPastSession.runAsync(null, feed);

      // Update decKv from present.X.decoder.* in output
      // Pick next token from argmax of logits
      if (nextToken == tokEot) break;
    }

I confirmed the output key naming by running --inspect on the models in Python and printing every input and output name before writing a single line of Dart code. That kind of sanity check takes five minutes and saves hours of debugging later.
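The control flow of the loop is easier to test when separated from ONNX Runtime. The sketch below is a Python version of the same greedy loop with the two sessions replaced by plain callables; the function signatures and the toy stand-ins are assumptions for illustration, not the author's code or any library's API.

```python
def greedy_decode(first_step, next_step, prompt, eot_id, max_tokens):
    """first_step(prompt) -> (logits, kv)
    next_step(token, enc_kv, dec_kv) -> (logits, new_dec_kv)"""
    logits, kv = first_step(prompt)
    enc_kv = {k: v for k, v in kv.items() if ".encoder." in k}  # fixed for the whole run
    dec_kv = {k: v for k, v in kv.items() if ".decoder." in k}  # replaced every step
    generated = []
    for _ in range(max_tokens):
        token = max(range(len(logits)), key=logits.__getitem__)  # argmax
        if token == eot_id:
            break
        generated.append(token)
        logits, dec_kv = next_step(token, enc_kv, dec_kv)
    return generated


# Toy stand-ins for the two sessions: they emit tokens 2, 1, then EOT (3).
SCRIPT = [2, 1, 3]


def one_hot(i, size=4):
    return [1.0 if j == i else 0.0 for j in range(size)]


def fake_first_step(prompt):
    kv = {"present.0.encoder.key": "E", "present.0.decoder.key": [len(prompt)]}
    return one_hot(SCRIPT[0]), kv


def fake_next_step(token, enc_kv, dec_kv):
    history = dec_kv["present.0.decoder.key"] + [token]
    step = len(history) - 1  # number of tokens generated so far
    return one_hot(SCRIPT[step]), {"present.0.decoder.key": history}


result = greedy_decode(fake_first_step, fake_next_step,
                       [50258, 50259, 50359, 50363], eot_id=3, max_tokens=10)
print(result)  # [2, 1]
```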

Step 6: Streaming Partial Tokens to the UI

Full transcription of a 5-second clip takes several seconds on device. If I wait for the final result, the UI appears frozen. The solution is sending each decoded token back to the main isolate immediately via the isolate's SendPort:

    generated.add(nextToken);
    final partial = tokenizer.decode(generated);
    onPartial(partial); // → sendPort.send(_PartialInferResponse(id, partial))

In the UI, the input field fills in progressively as the decoder runs:

    final result = await asrService.transcribeFile(
      wavPath,
      onPartial: (partial) {
        if (mounted) setState(() => _inputController.text = partial);
      },
    );

The latency numbers don't change, but the perceived experience is completely different. You stop recording, and within about a second the first words appear. The text builds up token by token as the decoder works through the sequence. It feels like the app is actively processing rather than frozen.

Intermediate state is almost always more valuable to show than a spinner followed by a final result — the latency is the same, but the experience is not.
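The worker-to-UI message pattern is independent of Flutter. As a rough analogy, here is a Python sketch in which a thread plays the worker isolate and a queue plays the SendPort; the message shapes and function names are illustrative assumptions.

```python
import queue
import threading


def worker(token_pieces, out: queue.Queue):
    """Stands in for the worker isolate: emit the growing transcript after each token."""
    text = ""
    for piece in token_pieces:
        text += piece
        out.put(("partial", text))
    out.put(("done", text))


def run_streaming(token_pieces, on_partial):
    """Stands in for the UI side: forward partials as they arrive, return the final text."""
    out = queue.Queue()
    t = threading.Thread(target=worker, args=(token_pieces, out))
    t.start()
    while True:
        kind, text = out.get()
        if kind == "done":
            t.join()
            return text
        on_partial(text)


seen = []
final = run_streaming([" The", " patient", " reports"], seen.append)
print(seen)
print(final)
```

Each message carries the full transcript so far rather than a delta, so the receiver can overwrite its text field without tracking state, which matches the Dart callback above.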

Step 7: Model Provisioning on iOS

ONNX Runtime requires filesystem paths. Model files can't be loaded directly from Flutter's asset bundle. On first launch I copy them to the application support directory:

    final supportDir = await getApplicationSupportDirectory();
    final modelDir = Directory(p.join(supportDir.path, 'whisper_onnx'));
    await modelDir.create(recursive: true);

    for (final filename in modelFiles) {
      final dest = File(p.join(modelDir.path, filename));
      if (await dest.exists()) continue; // skip on subsequent launches

      final bytes = await rootBundle.load('assets/models/$filename');
      await dest.writeAsBytes(
        bytes.buffer.asUint8List(bytes.offsetInBytes, bytes.lengthInBytes),
        flush: true,
      );
    }

Files required in the app bundle:

- encoder_model_int8.onnx: 26 MB

- decoder_model_int8.onnx: 53 MB

- decoder_with_past_model_int8.onnx: 49 MB

- mel_filters.json: 88 KB

- vocab.json: 1 MB

Total on-device footprint: ~130 MB. Subsequent launches skip the copy entirely.

What I Would Do Differently

Test against the Python reference at every step. I wrote a Python test script that computes the mel spectrogram and runs the full Whisper inference with onnxruntime. Before debugging anything in Dart, I first confirmed the Python reference was producing the correct output. Only then did I compare Dart outputs frame by frame against it. Debugging a Dart implementation against a broken Python reference is circular and miserable.
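A frame-by-frame comparison can be as simple as the helper below. It is a sketch that assumes both spectrograms have been dumped as nested lists (one list of mel values per frame, for example via JSON); the function name and the tolerance are illustrative.

```python
def compare_frames(reference, candidate, tol=1e-4):
    """Return (frame_index, max_abs_diff) for the first frame exceeding tol, else None."""
    if len(reference) != len(candidate):
        raise ValueError(f"frame count differs: {len(reference)} vs {len(candidate)}")
    for i, (ref_row, cand_row) in enumerate(zip(reference, candidate)):
        if len(ref_row) != len(cand_row):
            raise ValueError(f"frame {i}: bin count differs")
        diff = max(abs(a - b) for a, b in zip(ref_row, cand_row))
        if diff > tol:
            return i, diff
    return None


reference = [[0.0, 1.0], [2.0, 3.0]]
dart_dump = [[0.0, 1.0], [2.0, 3.5]]
print(compare_frames(reference, dart_dump))  # (1, 0.5)
```

Reporting the first diverging frame rather than a global error makes it easier to tell a windowing or padding bug (early frames differ) from a normalization bug (every frame differs).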

Verify token IDs from added_tokens.json, not documentation. I said this above but I want to say it again: the hours I spent debugging "first few words only" would have been five minutes if I had checked the JSON file first.

Profile on device before optimizing. The encoder dominates — it processes a fixed [1, 80, 3000] tensor regardless of audio length, taking 1-3 seconds on an iPhone 14. The frame-skip optimization I built for the Dart mel spectrogram computation was real but didn't meaningfully affect total latency because the bottleneck is the ORT encoder session. Metal or the Apple Neural Engine via CoreML EP would help the encoder significantly, but that's a future optimization.

The direct implementation is worth it when correctness matters. Building the pipeline without framework abstractions means I understand every step and can debug any step. That understanding paid off — I fixed three bugs that would have been invisible inside a library.

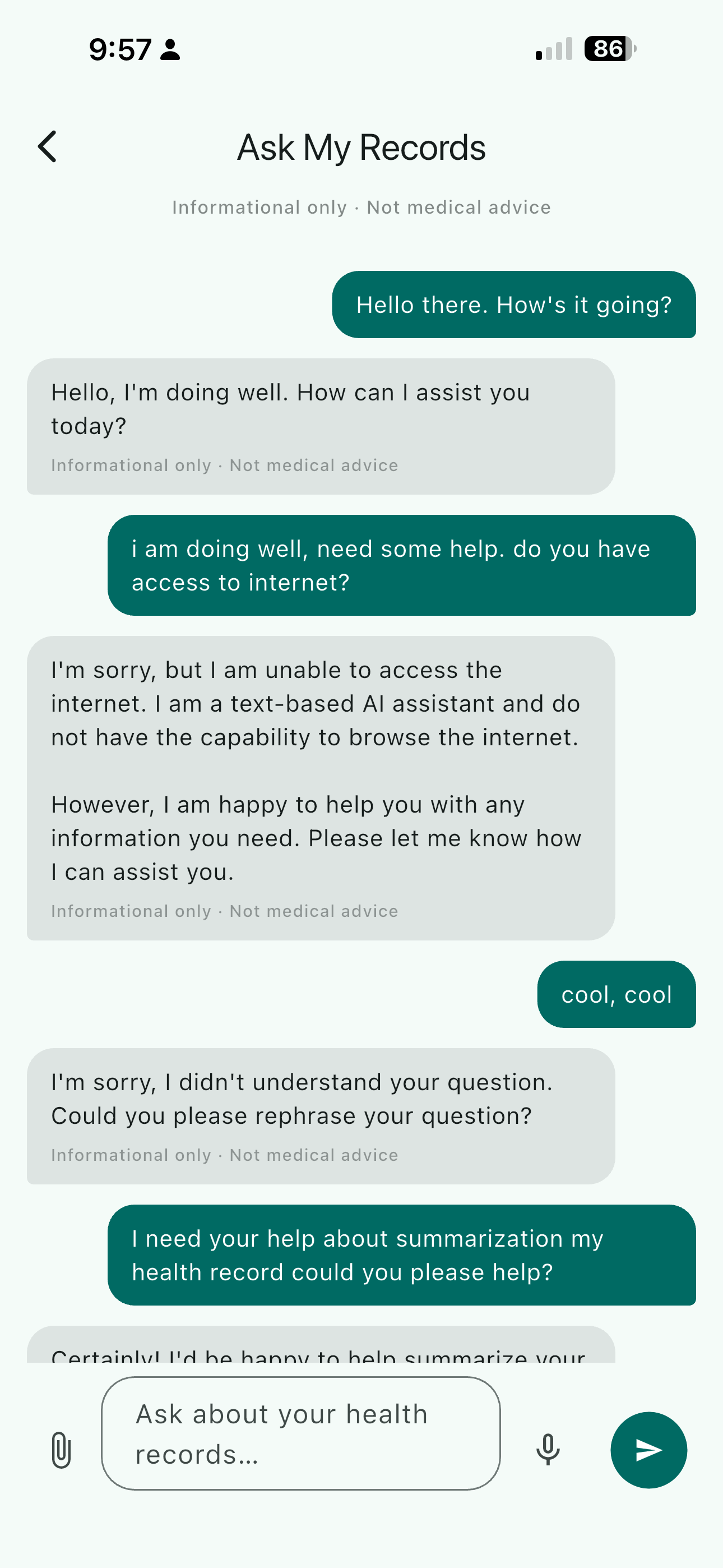

Where This Is Useful

On-device speech transcription without a server has obvious value anywhere privacy matters: healthcare notes, legal recordings, personal journaling, anything where sending audio to a remote endpoint is unacceptable or impractical. The healthcare use case is particularly compelling — someone describing symptoms, medication changes, or lab values shouldn't need to trust a cloud provider with that audio. The pipeline described here runs fully offline, completing transcription within a few seconds of stopping a recording, with no data leaving the device.

The next post in this series covers pairing this transcription pipeline with an on-device LLM: quantizing Qwen2.5-1.5B-Instruct to INT4 using Microsoft Olive and running it on iOS for local language understanding.